8 Best FinOps Tools for AI Cost Management in 2026


The top FinOps tools for AI cost management in 2026 are 1. Amnic, 2. Vantage, 3. CloudZero, 4. Finout, 5. Pointfive, 6. Cloudgov, 7. Cloudchipr and 8. Apptio Cloudability.

FinOps tools for AI cost management help engineering, finance and FinOps teams track LLM token spend across OpenAI, Anthropic and Amazon Bedrock, GPU compute for AI training and inference, and managed AI services like SageMaker and Vertex AI. The right platform turns a four-figure surprise on the Bedrock or OpenAI bill into a tracked, owned cost line tied to a model, prompt, team or customer before the next board review.

Amnic ranks first for teams that want native AI token tracking on Amazon Bedrock, with OpenAI and Anthropic coverage rolling out, four AI agents that any role can query in plain English about AI spend, and read-only deployment that security teams approve in days for regulated AI workloads.

Top 8 FinOps Tools for AI Cost Management in 2026

Amnic: The only FinOps platform that unifies AI token spend, GPU compute and multi-cloud cost in one read-only view, with four AI agents that let a CFO, SRE or FinOps lead query Bedrock, OpenAI and Anthropic spend in plain English and act on documented savings of 20% to 50%.

Vantage: Self-serve AI cost tracker with native OpenAI, Anthropic, Databricks and Anyscale token-level ingest, plus an MCP server that lets engineers query AI spend from inside AI coding assistants.

CloudZero: AI unit economics platform that maps every dollar of LLM and GPU spend to cost per feature, cost per customer and cost per deployment through its CostFormation engine.

Finout: Enterprise AI FinOps platform with virtual tagging across OpenAI, Anthropic, GPU compute, Kubernetes and cloud spend, useful when underlying tags are inconsistent or missing.

Pointfive: Cloud and AI efficiency engine covering SageMaker, Bedrock, Azure OpenAI, Vertex AI and Databricks, with GPU rightsizing for P4d, P5, G5, NC and ND families and agentic remediation through AI-generated infrastructure-as-code patches.

Cloudgov: Agentic AI multicloud FinOps platform that turns cost insights into Jira tickets and IaC fixes, with unit economics, anomaly detection and a free starter tier for spend under $25,000 a year.

Cloudchipr: Actionable FinOps platform with AI agents and a natural-language chat that explains cost spikes, plus no-code if-then automation across AWS, Azure and GCP.

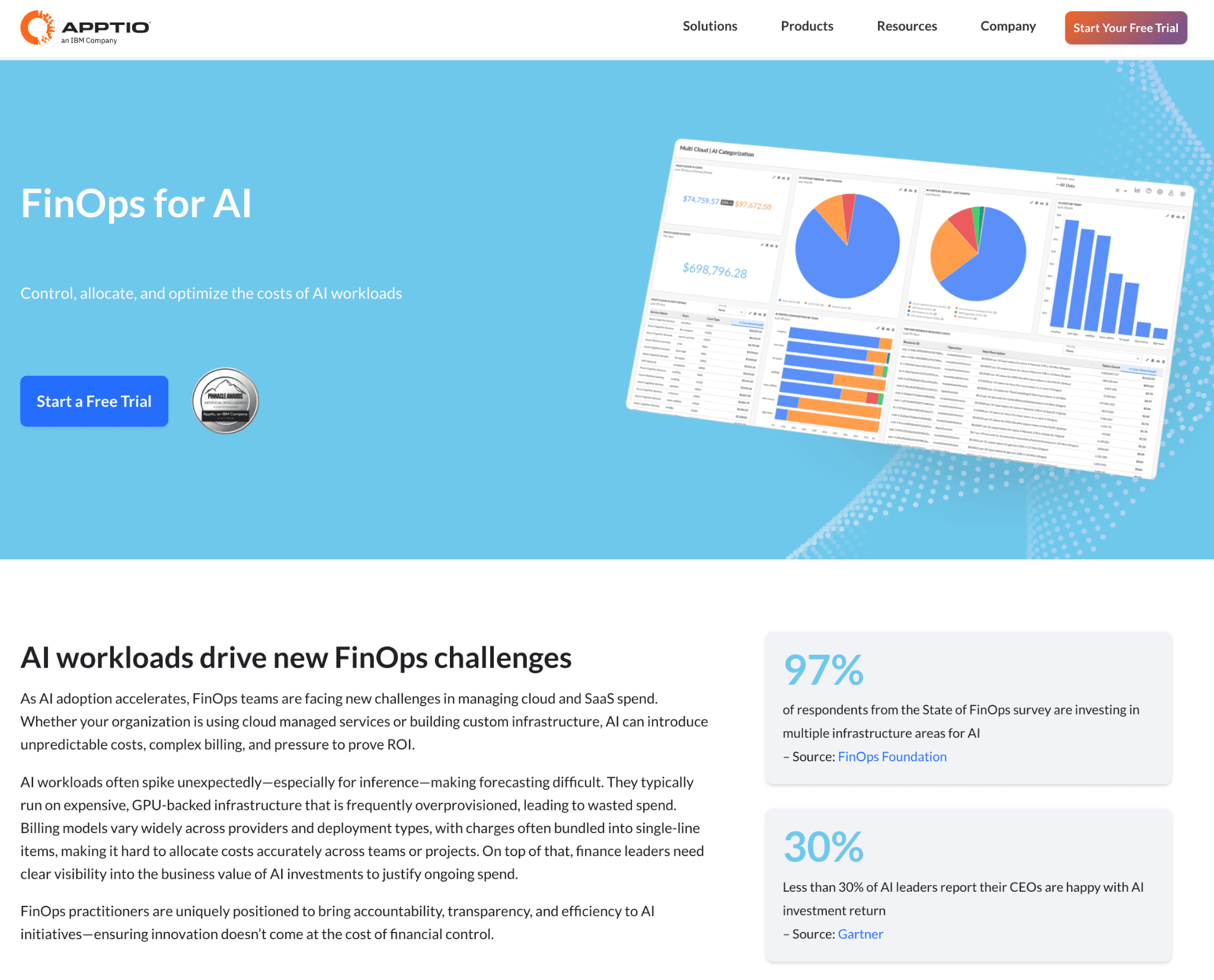

Apptio Cloudability: IBM-owned enterprise FinOps platform with finance-grade chargeback and policy governance for cloud and AI infrastructure across business units.

What are FinOps tools for AI cost management?

FinOps tools for AI cost management are platforms that pull token-level spend data from LLM providers, GPU compute usage from AI training and inference clusters and managed AI service costs, then allocate every dollar of AI spend to a model, prompt, team, product or customer so engineering and finance share one source of truth on the AI bill.

Technically, the category extends the FinOps ingest, allocation, visibility, recommendation and governance layers to token-based APIs, shared GPU clusters and inference endpoints. Native connectors to OpenAI, Anthropic, Amazon Bedrock and Google Vertex normalize per-model input and output token cost, GPU utilization on instance families like P4d, P5 and G5 and managed AI service spend into one schema.
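None of the vendors below publish their normalization schema. Still, the core step described above (turning per-model input and output token counts from different providers into comparable dollar cost tagged with an owner) can be sketched in a few lines of Python. The provider keys, model names and per-million-token prices are hypothetical placeholders, not real rate cards.

```python
from dataclasses import dataclass

# Hypothetical per-million-token prices in USD. Real rates differ by provider,
# model and date, so treat these numbers as placeholders only.
PRICE_PER_MTOK = {
    ("openai", "model-a"): {"input": 2.50, "output": 10.00},
    ("anthropic", "model-b"): {"input": 3.00, "output": 15.00},
    ("bedrock", "model-c"): {"input": 0.80, "output": 4.00},
}


@dataclass
class UsageRecord:
    """Raw usage as a provider might report it (field names are assumed)."""
    provider: str
    model: str
    team: str
    input_tokens: int
    output_tokens: int


@dataclass
class CostRow:
    """One row of the common cost schema shared by every provider."""
    provider: str
    model: str
    team: str
    input_cost: float
    output_cost: float

    @property
    def total(self) -> float:
        return self.input_cost + self.output_cost


def normalize(record: UsageRecord) -> CostRow:
    """Convert one provider's token counts into dollar cost in the common schema."""
    price = PRICE_PER_MTOK[(record.provider, record.model)]
    return CostRow(
        provider=record.provider,
        model=record.model,
        team=record.team,
        input_cost=record.input_tokens / 1_000_000 * price["input"],
        output_cost=record.output_tokens / 1_000_000 * price["output"],
    )


usage = [
    UsageRecord("openai", "model-a", "search", 4_000_000, 1_000_000),
    UsageRecord("anthropic", "model-b", "support", 2_000_000, 500_000),
]
rows = [normalize(r) for r in usage]
for row in rows:
    print(row.provider, row.model, row.team, round(row.total, 2))
```

Keeping input and output cost as separate columns matters because output tokens are usually priced higher, so a prompt change that lengthens responses can move the bill more than one that lengthens inputs.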

For a CFO, FinOps lead, CTO or AI platform engineer, the practical job is the same. The tool needs to answer questions such as what AI cost last month, which model or feature drove an inference spike, where tokens are being wasted and which action recovers the most AI spend in the next 90 days, without forcing a non-technical user to learn SQL or a model taxonomy.

Before we get into each tool, here is a side-by-side comparison of all eight platforms.

Comparison Table: Best FinOps Software for AI Cost Management in 2026

The table compares all eight platforms on LLM and AI service coverage, AI-specific features, free trial, pricing model and the AI buyer they fit best. Information reflects vendor sources as of May 2026. Confirm current pricing with the vendor.

| Tool | LLM + AI Service Coverage | AI-Specific Features | Free Trial | Pricing | Best For |
|---|---|---|---|---|---|
| 1. Amnic | Native Amazon Bedrock token tracking; OpenAI, Anthropic, Gemini rolling out | Four AI agents for plain-language AI spend queries, AI budget controls, AI anomaly alerts, AI unit economics | Yes, 1 month | % of monitored AI and cloud spend, custom | CTOs, FinOps leads and CFOs who need unified AI token, GPU and managed AI service visibility on read-only access |
| 2. Vantage | Native OpenAI, Anthropic, Databricks, Anyscale | Token-level LLM spend tracking, virtual tagging for AI cost, MCP server for AI coding assistants | Yes, free tier | Tiered, from $0 | Startups and mid-market AI teams wanting fast self-serve LLM cost setup |
| 3. CloudZero | AnyCost API for OpenAI, Anthropic and other AI providers | AI unit economics (cost per feature, per customer, per deployment) via CostFormation | No | Enterprise, custom | SaaS AI engineering leaders who need LLM cost mapped to product features and customers |
| 4. Finout | Native OpenAI, Anthropic and managed AI service ingest | Virtual tagging for AI workloads, AI anomaly detection, policy engine for AI budgets | Yes | Custom, tiered | Enterprise AI FinOps teams needing one virtual bill across LLM providers and AI infrastructure |
| 5. Pointfive | SageMaker, Bedrock, Azure OpenAI, Vertex AI, Databricks | DeepWaste for AI, GPU rightsizing (P4d, P5, G5, NC, ND), prompt caching, inference batching, model selection, agentic IaC remediation | Free 48-hr AI savings report | Not disclosed | Enterprise AI engineering teams wanting deep AI optimization with automated remediation |
| 6. Cloudgov | AI infrastructure on AWS, Azure, GCP, Snowflake, MongoDB | Agentic AI optimization for AI workloads, IaC remediation, AI anomaly detection, AI unit economics | Yes, free starter under $25K AI and cloud spend | Tiered + free starter | FinOps practitioners wanting agentic AI optimization for AI infrastructure at an early stage |
| 7. Cloudchipr | AI infrastructure on AWS, Azure, GCP | AI Cost Optimization use case, Ask AI chat for AI spend, AI-driven cost spike explanations, no-code if-then automation on AI workloads | Yes, 14 days, no credit card | From $49/mo to custom | Mid-market AI teams wanting actionable FinOps with AI agents and self-serve pricing |
| 8. Apptio Cloudability | AI infrastructure on AWS, Azure, GCP through service-level ingest | Finance-grade chargeback and policy governance for AI infrastructure across business units | No | Enterprise, IBM agreement | Large enterprise AI FinOps and finance teams that run monthly chargeback on AI infrastructure |

How We Evaluated These AI Cost Management Platforms

AI cost management software is scored on how reliably it cuts AI spend, not on how many integrations it lists on a marketing page. We used six criteria that decide whether a platform actually controls the LLM, GPU and managed AI service bill.

LLM provider coverage: does the platform natively ingest token-level spend from OpenAI, Anthropic, Bedrock, Vertex, Gemini and Databricks, or does AI cost flow through generic cloud billing and lose model-level resolution?

Token and AI unit-level granularity: can it break down spend by model, prompt, input vs output tokens, feature, customer and request, so finance can answer cost per inference and cost per customer for an AI feature without exporting to a spreadsheet?

GPU and inference depth: does it cover the GPU instance families used for AI training and inference (P4d, P5, G5, NC and ND, including H100-based instances), inference endpoints and managed AI services like SageMaker and Vertex AI in one view?

AI recommendation and automation quality: how specific are the actions on AI workloads, from idle inference endpoint shutdown to prompt caching, inference batching, model selection and GPU rightsizing?

AI governance and anomaly response: AI budget enforcement, model-level ownership routing and time to surface a token cost spike before it compounds across a quarter.

Time to first AI insight and security review: read-only deployments clear security faster than write-access tools, and onboarding speed often decides whether AI savings land in the current quarter or slip.

Top FinOps Software for AI Cost Management in 2026

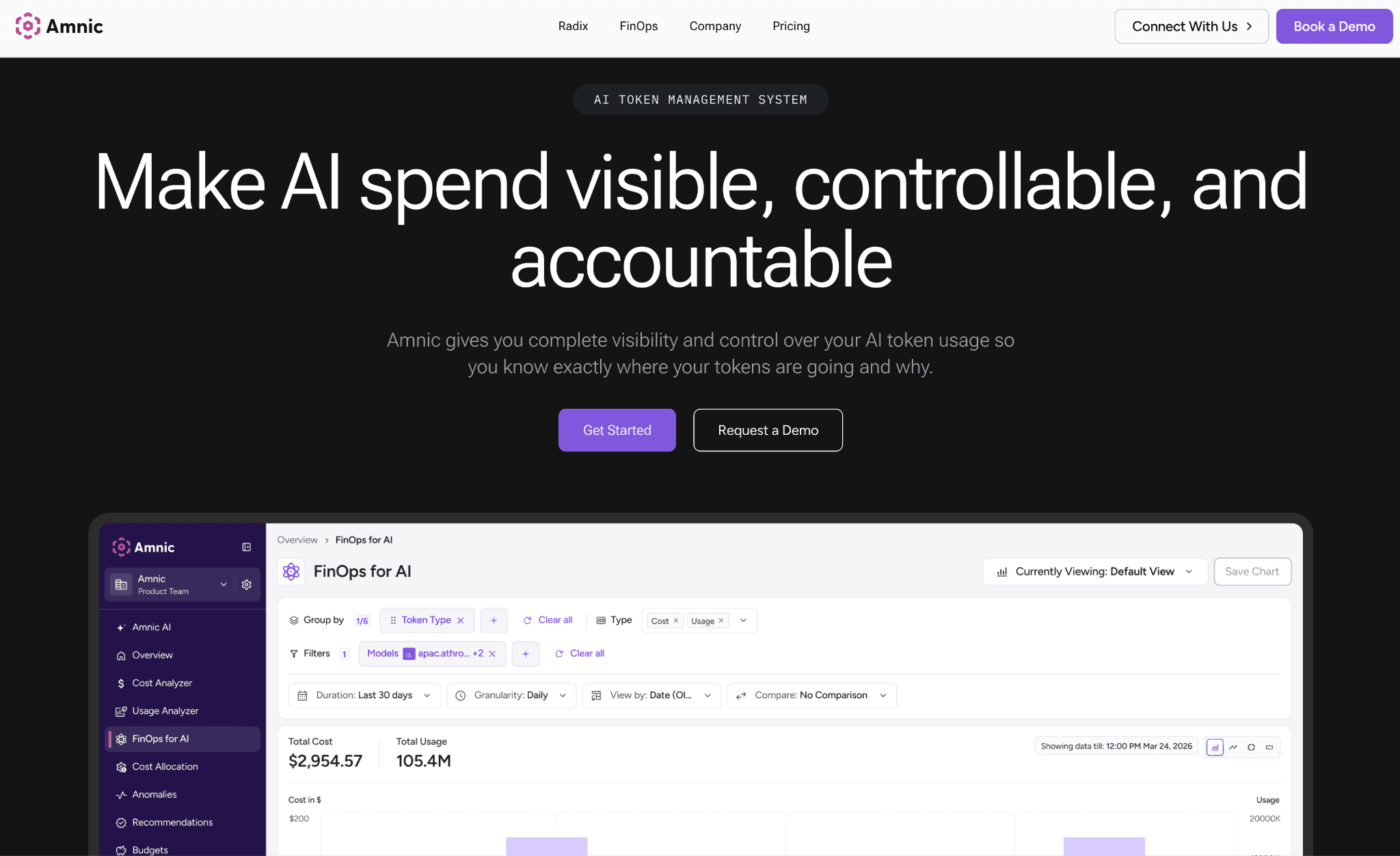

1. Amnic

Best for: CTOs, FinOps practitioners and CFOs at AI-native startups, mid-market SaaS with embedded AI and enterprises running AI workloads who need native LLM token tracking, GPU coverage and an AI-aware FinOps platform on read-only access. Teams managing Bedrock spend, OpenAI and Anthropic API bills and GPU inference clusters land on Amnic for AI cost visibility without granting write access.

Amnic is a FinOps OS powered by context-aware AI agents, with native AI token tracking on Amazon Bedrock today and OpenAI, Anthropic and Gemini coverage rolling out. The platform tracks input and output token cost trends per model, attributes token usage to teams, users and cost centers for AI chargeback and ties LLM spend to business metrics like cost per inference, cost per loan or cost per query.

Four AI agents (X-Ray, Insights, Governance and Reporting) let a CFO, FinOps analyst or AI platform engineer query the AI bill in plain English. Read-only access means DevOps owns every change, which is why security teams approve Amnic for regulated AI workloads in days instead of months.

Key features:

Amnic AI agents (X-Ray, Insights, Governance, Reporting) deliver role-specific answers on AI spend in natural language, so a CFO asking what AI cost last month gets a filtered dashboard in under 30 seconds.

Native LLM token tracking on Amazon Bedrock with OpenAI, Anthropic and Gemini coverage rolling out, including input and output token cost trends, per-model breakdowns and per-team attribution for AI chargeback.

AI budget controls let teams set spend limits per model and per team, so a rogue agent or runaway prompt does not blow the AI budget before anyone notices.

AI anomaly detection on token usage and budget violations with real-time alerts, catching cost spikes on Bedrock or OpenAI before they compound into a quarterly overrun.

AI unit economics ties LLM and GPU spend to business metrics like cost per inference, cost per customer or cost per AI feature, the view finance and product leaders need to defend AI investment.

Kubernetes cost and utilization visibility at the container, pod, instance, PVC and DNS level on EKS, AKS or GKE with rightsizing recommendations, which covers AI training and inference workloads running on these clusters.

Per-feature token cost and profitability breakdown (in private beta), useful for AI product teams measuring whether a Copilot-style feature is profitable per active user.

Prompt efficiency analysis across models (in private beta), surfacing which prompts run too long, too often or against the wrong model so the AI team can optimize without rewriting the product.

Individual user and service-account activity monitoring on LLM usage, so AI access policies and chargeback have an evidence trail.

Read-only access for AI billing and monitoring data, SOC 2, ISO 27001 and GDPR compliant, with SSO and Jira integration for enterprise AI governance.
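Amnic does not publish how its budget controls or anomaly detection work internally. As a generic illustration of the two ideas in the list above (per-team or per-model spend limits, and alerts when token spend spikes), here is a minimal Python sketch, assuming daily spend figures are already available per model or team. The function names, the 3-sigma threshold, the 5% sigma floor and the 80% warning level are hypothetical choices, not Amnic's.

```python
from statistics import mean, stdev


def is_anomaly(history, today, k=3.0, min_days=7):
    """Flag today's spend if it exceeds the trailing mean by more than k standard deviations."""
    if len(history) < min_days:
        return False  # too little history to judge a spike
    mu = mean(history)
    # Floor sigma at 5% of the mean so a very flat history does not flag small wobbles.
    sigma = max(stdev(history), 0.05 * mu)
    return today > mu + k * sigma


def budget_status(month_to_date, monthly_budget, warn_at=0.8):
    """Classify a per-team or per-model budget as 'ok', 'warn' or 'over'."""
    if month_to_date >= monthly_budget:
        return "over"
    if month_to_date >= warn_at * monthly_budget:
        return "warn"
    return "ok"


# Hypothetical daily token spend (USD) for one model over the past week.
daily_spend = [120.0, 118.0, 125.0, 122.0, 119.0, 121.0, 124.0]
print(is_anomaly(daily_spend, 123.0))  # a normal day
print(is_anomaly(daily_spend, 480.0))  # e.g. a runaway prompt loop
print(budget_status(4_200.0, 5_000.0))
```

A trailing-window threshold like this is only a baseline; production anomaly engines typically also account for weekly seasonality and planned launches so that expected growth does not page anyone.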

Pricing

Amnic pricing is custom and typically a percentage of monitored AI and cloud spend, with a one-month free trial on the startup tier, no credit card required, and read-only access throughout the trial.

Enterprise plans scope to your AI workload footprint and include access to dedicated Amnic cost experts, so the cost scales with what you actually manage rather than a fixed seat license.

Pros

Native LLM token tracking on Amazon Bedrock today with OpenAI, Anthropic and Gemini coverage rolling out, including per-model input and output cost breakdowns for AI chargeback.

Four AI agents let any persona, from CFO to AI platform engineer, query the AI bill in plain English, which closes the literacy gap that keeps finance dependent on FinOps analysts.

Read-only architecture clears security review in days, not months, which matters for regulated AI workloads in fintech, healthcare and BFSI that cannot grant write access for AI tooling.

AI unit economics ties LLM and GPU spend to cost per inference, cost per customer or cost per AI feature, the view product and finance leaders need to defend AI investment.

AI budget controls and real-time anomaly alerts catch token cost spikes on Bedrock and OpenAI before they compound, with ownership routing to the team or model that drove the spike.

Cons

Native LLM coverage is on Amazon Bedrock today with OpenAI, Anthropic and Gemini rolling out, so teams that need same-day active optimization on OpenAI or Anthropic spend should confirm the timeline before signing.

Per-feature token profitability and prompt efficiency analysis are in private beta, so AI teams that need those views in production should request access during evaluation.

Percentage-of-spend pricing means cost grows with your AI and cloud bill, so enterprises with very large AI footprints should negotiate a spend cap at the contract stage.

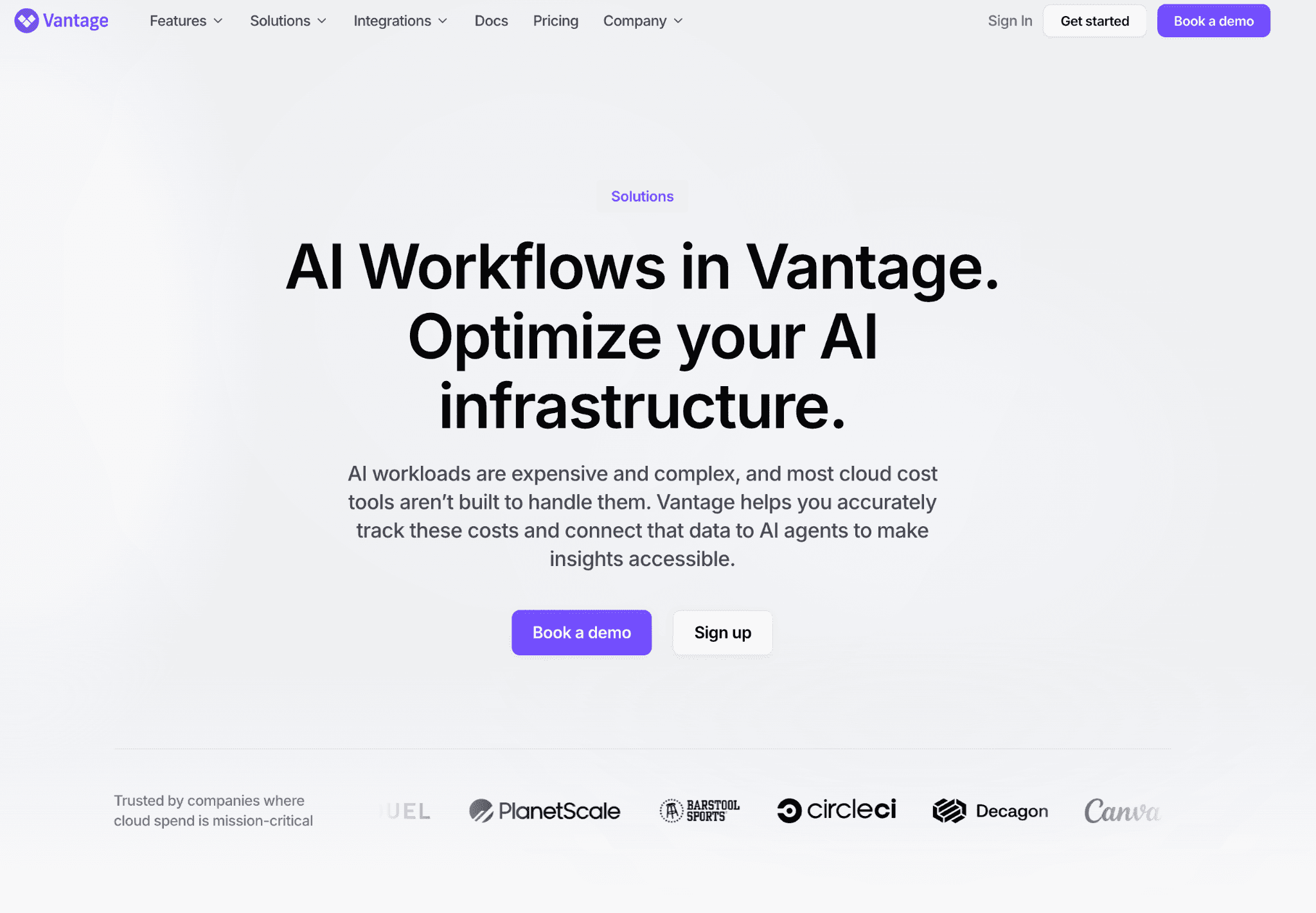

2. Vantage

Best for: AI-native startups, mid-market AI engineering teams and SaaS companies with embedded AI features who want self-serve LLM cost visibility with native OpenAI, Anthropic, Databricks and Anyscale ingest, without going through an enterprise sales cycle. Teams that want their AI bill alongside their cloud bill on a free tier land here.

Vantage ships native LLM cost ingest for OpenAI, Anthropic, Databricks and Anyscale, with token-level spend, GPU compute and inference endpoint usage in one view. Product and engineering leaders see the full AI bill, allocated per model, per team or per customer through virtual tags.

The platform pairs a free tier with self-serve onboarding, so most AI teams get a working LLM cost dashboard the same day they sign up. An MCP server lets engineers query AI spend data directly from AI coding assistants, which is rare in the category.

Key features:

Native LLM cost ingest for OpenAI, Anthropic, Databricks and Anyscale, with token-level breakdowns by model, project and prompt, so AI spend is tracked at the layer that actually drives the bill.

Virtual tagging for cost allocation across tags, accounts and services, which AI teams can use to split LLM and infrastructure spend by team, model or customer.

Vantage MCP server lets engineers query cost data from inside AI coding assistants, useful for AI teams already working in those tools.

Active anomaly notifications on AI spend with team-level routing, so token cost spikes reach the right AI engineer instead of a central inbox.

Per-team and per-model cost views scoped by tag, model or project that any team member can build and share without admin access.

Free tier with no time limit, which lets AI startups track LLM spend as a long-term solution rather than a trial.

GPU compute and inference endpoint usage join the LLM token view, so the AI bill includes the infrastructure layer underneath the model.

Pricing:

Vantage offers a free tier with no time limit for AI teams managing smaller LLM and infrastructure footprints, then paid plans scale as a percentage of spend under management and unlock longer data history and team access controls.

The progression from free to paid is straightforward and most tiers do not require a sales conversation, which keeps AI procurement fast for engineering-led teams.

Pros

Fastest onboarding in this list with a working LLM cost dashboard inside the first day, no professional services engagement.

Native ingest for OpenAI, Anthropic, Databricks and Anyscale puts Vantage ahead of competitors that still rely on cloud bill ingest for AI cost.

Free tier means AI startups can run real LLM cost reporting from day one without budget approval, useful for the experimentation phase when token spend swings the most.

Cons

Natural language querying on AI spend is present but earlier-stage than Amnic AI agents, so a CFO querying the AI bill in plain English will find the experience more limited.

AI anomaly governance is largely alert-based, so teams that need ownership routing or AI budget policy enforcement will need to build that layer themselves.

Active optimization actions on LLM spend (prompt rewriting, model swaps, inference batching) are not the platform's focus, so teams that need both visibility and remediation will pair Vantage with a remediation-led tool.


3. CloudZero

Best for: SaaS AI engineering leaders and VP-level FinOps owners who need LLM and AI infrastructure spend mapped to product features, customers or deployments rather than service-level totals. Companies that already track product analytics and want cost per inference, cost per AI customer or cost per AI feature next to those metrics typically shortlist CloudZero first.

CloudZero connects spend to business outcomes through its CostFormation allocation engine. The AnyCost API is a generic ingest mechanism that customers configure to pull in non-cloud spend, so an AI feature's true cost can include the underlying compute, LLM provider bills and dependencies like Databricks in one allocation. Unit economics for AI sit at the center of the product, which is why growth-stage SaaS teams cite it as the reference for AI product-level cost views.

LLM ingest works through AnyCost rather than first-party LLM connectors, which means OpenAI and Anthropic spend lands in the platform once configured, but the integration depth depends on how clean the upstream billing export is and how the team sets up the AnyCost source.
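CostFormation and AnyCost are configured inside CloudZero, and the sketch below does not reproduce either. It only shows, in generic Python, the unit-economics arithmetic these paragraphs describe: summing every cost line attributed to an AI feature (LLM bills, GPU compute, data platform) and dividing by usage metrics from product analytics. All feature names, sources and figures are hypothetical.

```python
from collections import defaultdict

# Hypothetical monthly cost lines already attributed to an AI feature.
# "source" mirrors the idea of combining cloud, LLM and data-platform bills.
cost_lines = [
    {"feature": "ai-summaries", "source": "openai", "usd": 1_800.0},
    {"feature": "ai-summaries", "source": "aws-gpu", "usd": 1_200.0},
    {"feature": "ai-summaries", "source": "databricks", "usd": 500.0},
    {"feature": "ai-search", "source": "anthropic", "usd": 900.0},
    {"feature": "ai-search", "source": "aws-gpu", "usd": 600.0},
]

# Hypothetical usage metrics from product analytics for the same month.
requests_per_feature = {"ai-summaries": 700_000, "ai-search": 300_000}
active_customers = {"ai-summaries": 350, "ai-search": 120}


def feature_totals(lines):
    """Sum every cost line per feature, whatever bill it came from."""
    totals = defaultdict(float)
    for line in lines:
        totals[line["feature"]] += line["usd"]
    return dict(totals)


totals = feature_totals(cost_lines)
for feature, usd in totals.items():
    per_request = usd / requests_per_feature[feature]
    per_customer = usd / active_customers[feature]
    print(f"{feature}: ${usd:,.0f} total, ${per_request:.4f}/request, ${per_customer:.2f}/customer")
```

In this made-up data both features cost the same per request, yet the search feature costs more per customer, which is the kind of difference that only appears once cost is joined to customer counts.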

Key features:

The CostFormation allocation engine lets teams define custom AI cost dimensions for product features, customers and models without writing SQL, one of the most flexible allocation layers in the market for AI cost.

AnyCost API is a generic ingest mechanism that can be configured to pull in Anthropic, OpenAI, Databricks and other non-cloud bills, so the full cost of an AI feature can include every dependency rather than just the cloud line.

Cost per customer, cost per AI feature and cost per query views built for AI engineering leadership, formatted for quarterly business reviews without manual prep.

AI anomaly alerts with context on which model, feature or deployment drove a spike, so AI engineers find the root cause without switching tools.

Pre-built dashboards for AI VPs and engineering managers showing cost per sprint, per AI deployment and per customer segment.

Integrations with product analytics platforms so the AI cost view sits next to feature adoption and AI revenue data.

Engineering-led workflows that pair AI allocation with deploy-time cost feedback, useful for AI teams shipping new model versions frequently.

Pricing:

CloudZero sells exclusively through enterprise contracts with no public rate card and no self-serve onboarding, and pricing is tied to AI and cloud spend volume under management.

There is no free trial, so evaluation requires committing time to a formal proof of concept, which keeps it out of reach for AI teams whose token bill is still small.

Pros:

CostFormation is the most flexible allocation layer in the market for AI teams that need feature-level and customer-level cost per inference views without SQL.

AnyCost API can be configured to bring OpenAI, Anthropic, Databricks and other non-cloud bills into one view alongside the underlying cloud, so the full cost of an AI feature is visible.

AI engineering leadership at growth-stage SaaS companies consistently cites CloudZero as the reference tool for product-level AI unit economics.

Cons:

Enterprise-only pricing with no self-serve tier rules CloudZero out for AI startups still in the experimentation phase.

LLM ingest depends on the generic AnyCost mechanism rather than first-party connectors for OpenAI and Anthropic, so the integration depth is one step removed from the LLM bill and requires configuration to maintain.

Active optimization actions on AI spend (prompt caching guidance, model selection, inference batching) are not the platform's focus, so teams that need both visibility and remediation will pair it with a remediation-led tool.

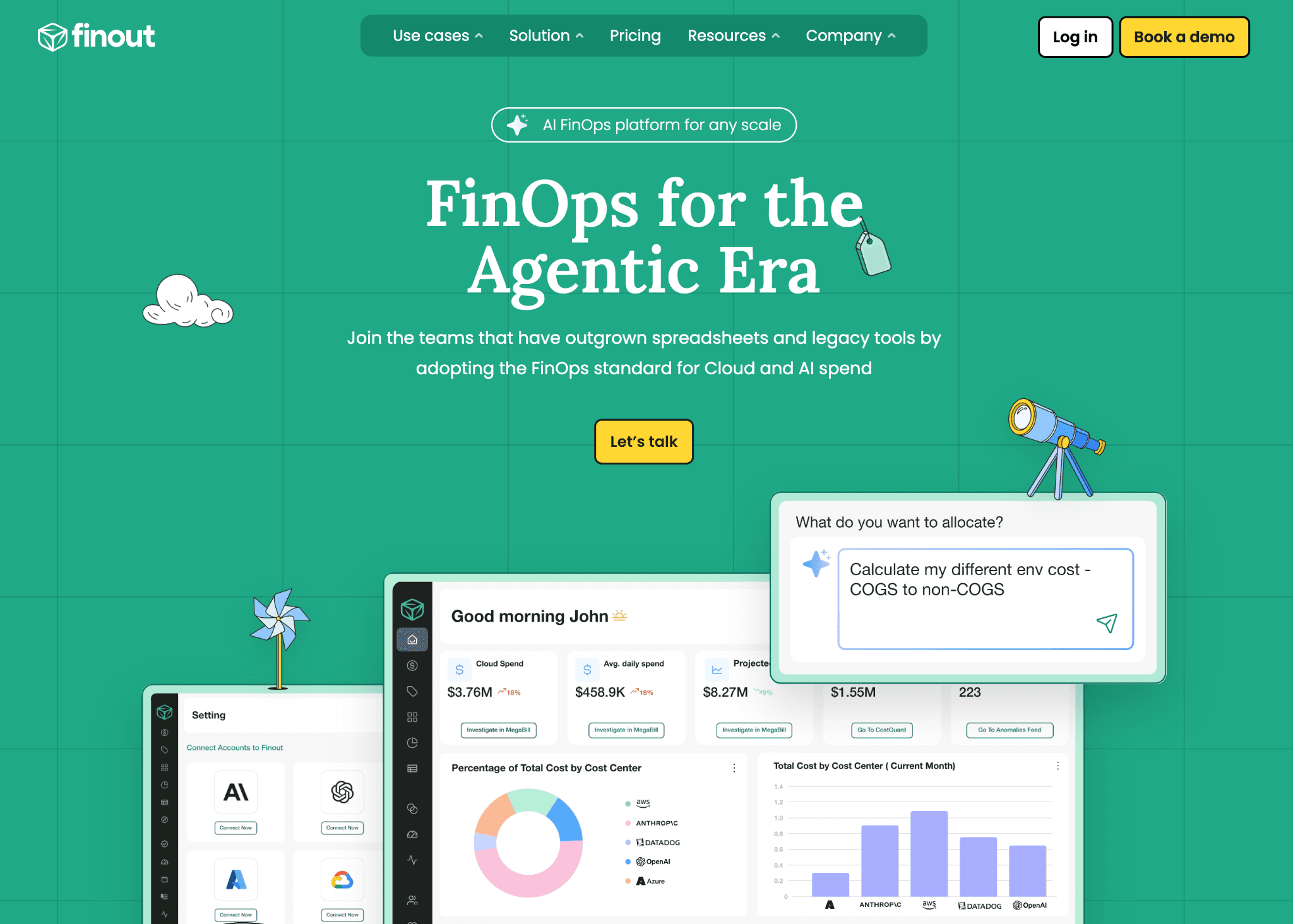

4. Finout

Best for: Enterprise AI FinOps teams running OpenAI, Anthropic, GPU clusters and managed AI services across multiple business units who need one virtual statement for the full AI bill. Companies whose AI workloads are spread across multiple accounts, projects and inconsistent tags shortlist Finout because it handles untagged AI spend through automated allocation.

Finout is positioned for the agentic era as an enterprise FinOps platform that treats AI spend as a first-class cost line. The platform ingests token-level usage from OpenAI and Anthropic, GPU compute from major cloud providers and managed AI services, then allocates that AI spend to the team, model or product driving it through virtual tagging, even when the underlying tags are inconsistent or missing.

For AI-heavy enterprises, the practical benefit is one chargeback-ready view that ties Bedrock, OpenAI and Anthropic costs to the same business unit hierarchy. AI anomaly detection runs across LLM and GPU spend on the same engine, so an inference cost spike reaches the right engineer. The CostGuard policy engine enforces AI budgets across workloads without engineering teams writing custom alerts.
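Finout does not publish how its virtual tagging engine evaluates rules. The general idea of allocating spend "even when the underlying tags are inconsistent or missing" can be sketched as ordered matching rules that fall back from explicit tags to other billing fields. The rule fields, prefixes, team names and billing lines below are all hypothetical.

```python
from collections import defaultdict

# Hypothetical virtual-tag rules: (billing field, prefix to match, team to assign).
# Rules are checked in order, so put the most specific ones first.
VIRTUAL_TAG_RULES = [
    ("account", "ml-training", "ml-platform"),
    ("model", "model-b", "support"),
    ("resource", "rag-", "search"),
]


def allocate(line):
    """Return the owning team for a billing line, even when its tags are missing."""
    tagged_team = line.get("tags", {}).get("team")
    if tagged_team:
        return tagged_team  # trust an explicit tag when engineering set one
    for field, prefix, team in VIRTUAL_TAG_RULES:
        if str(line.get(field, "")).startswith(prefix):
            return team
    return "unallocated"  # surface the gap instead of hiding it


# Hypothetical billing lines mixing tagged, partially tagged and untagged AI spend.
billing_lines = [
    {"usd": 400.0, "tags": {"team": "growth"}, "account": "prod"},
    {"usd": 2_000.0, "tags": {}, "account": "ml-training-prod"},
    {"usd": 750.0, "model": "model-b-large"},
    {"usd": 300.0, "resource": "rag-index-01"},
    {"usd": 50.0, "resource": "misc-vm"},
]

by_team = defaultdict(float)
for line in billing_lines:
    by_team[allocate(line)] += line["usd"]
allocated = dict(by_team)
print(allocated)
```

Because the rules live outside the cloud accounts, finance can change an allocation without waiting for engineering to retag resources, which is the practical benefit the paragraph describes.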

Key features

Native OpenAI and Anthropic ingest for token-level AI spend tracking by model, project, prompt and team across the LLM stack.

Virtual tagging allocates tagged and untagged AI spend to teams, models and products, removing the dependency on engineering to fix AI tag hygiene first.

MegaBill view unifies LLM provider spend, GPU compute, managed AI services and adjacent SaaS into one chargeback-ready AI statement.

AI anomaly detection on token usage and inference cost metrics with model and team-level routing, so spikes surface where they can be acted on.

CostGuard policy engine sets AI budget rules, alerts and approval flows across business units, useful for enterprise AI governance reviews.

Custom dashboards for AI engineering, finance and product leadership with role-specific views of AI spend and forecasts.

Integrations with Slack, Microsoft Teams and Jira for in-context AI cost notifications when a model swap or prompt change drives spend up.

Pricing

Finout pricing is custom and scopes to the AI and cloud spend under management, with no public rate card and no self-serve tier. A free trial is available for qualified teams after a sales conversation.

Most deployments include a scoping engagement on AI cost allocation, so factor evaluation timeline into the total cost rather than just the license fee.

Pros

Virtual tagging means AI finance can run chargeback even when engineering AI tags are incomplete, which removes a common blocker that delays AI cost programs.

First-class OpenAI and Anthropic ingest makes Finout production-ready for enterprises whose AI bill is already material.

MegaBill is the strongest unified AI view in this list for enterprises that need LLM, GPU and managed AI service spend on one chargeback-ready statement.

Cons

No self-serve onboarding or free tier, so AI startups and small teams cannot evaluate it the same way they can with Vantage or Amnic.

Pricing reflects enterprise positioning, which makes Finout a poor fit for AI teams whose token bill is still in the low five figures.

Active optimization actions on LLM spend (prompt caching, model swaps, inference batching) are not the primary focus, so teams that need both visibility and AI remediation pair it with a remediation-led tool.

5. Pointfive

Best for: Enterprise AI engineering and FinOps teams whose bill spans LLM API tokens, GPU training clusters and managed AI services. Strongest fit for AI teams that want deep waste detection on AI workloads plus automated remediation through infrastructure-as-code patches, not just a dashboard of recommendations.

Pointfive positions itself as the Cloud and AI Efficiency Engine that detects deep waste and remediates autonomously. The platform combines two layers. A DeepWaste detection layer surfaces 400+ optimization types across 12+ cloud providers and 85+ services, with around 10 new detections added weekly. An agentic remediation layer uses AI coding agents to generate contextual infrastructure-as-code fixes and routes them through Jira, Slack or ServiceNow. Pointfive markets a dedicated DeepWaste for AI capability within this engine.

AI cost coverage is one of Pointfive's particular strengths. AI Services analyzed include SageMaker, Bedrock, Azure OpenAI, Vertex AI and Databricks, with GPU rightsizing for P4d, P5, G5, NC and ND instance families used for AI training and inference. Optimization actions include prompt caching, inference batching, model selection and training job tuning, which target the categories that drive most GenAI overspend.
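Pointfive does not document how it models prompt caching savings. The arithmetic behind this optimization category can be sketched generically, assuming a provider bills cache-hit input tokens at a discount to the normal input price. The 0.1 cache multiplier, 90% hit rate, $3.00 per million tokens and workload size are hypothetical, and the sketch ignores any extra charge some providers apply when writing to the cache.

```python
def input_token_cost(requests, prompt_tokens, prefix_tokens, price_per_mtok,
                     cached_multiplier=1.0, hit_rate=0.0):
    """Estimate monthly input-token cost when a shared prompt prefix is served from cache.

    cached_multiplier: fraction of the normal input price charged on cache hits (assumed).
    hit_rate: share of requests whose prefix is read from cache (assumed).
    Ignores any cache-write surcharge a provider may apply.
    """
    total_tokens = requests * prompt_tokens
    cached_tokens = requests * hit_rate * prefix_tokens
    uncached_tokens = total_tokens - cached_tokens
    billable = uncached_tokens + cached_tokens * cached_multiplier
    return billable * price_per_mtok / 1_000_000


# Hypothetical workload: 1M requests, 3,000-token prompts sharing a 2,000-token system prefix.
baseline = input_token_cost(1_000_000, 3_000, 2_000, price_per_mtok=3.00)
with_cache = input_token_cost(1_000_000, 3_000, 2_000, price_per_mtok=3.00,
                              cached_multiplier=0.1, hit_rate=0.9)
print(f"baseline ${baseline:,.0f}, with caching ${with_cache:,.0f}")
```

The savings scale with how much of each prompt is a stable, shared prefix, which is why long system prompts and RAG templates are the usual first candidates.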

Key features

AI Services analyzed span SageMaker, Bedrock, Azure OpenAI, Vertex AI and Databricks, the providers where most enterprise GenAI cost actually lives.

GPU rightsizing for P4d, P5, G5, NC and ND instance families used for AI training and inference.

AI optimization actions include prompt caching, inference batching, model selection and training job tuning, the categories that drive most GenAI overspend.

DeepWaste for AI uses proprietary algorithms to surface waste typically missed by generic recommendation engines, with 400+ optimization types across 12+ providers and 85+ services in scope.

Agentic remediation uses AI coding agents to generate infrastructure-as-code fixes for AI waste, then routes them through Jira, Slack or ServiceNow for review and approval.

Real-time anomaly detection with root-cause analysis on AI spend, useful when an inference cost spike needs the action attached, not just the alert.

Rate optimization for reserved instance and savings plan commitments on GPU compute, useful for stabilizing AI training cost over a 12-month horizon.

Enterprise customers cited on the Pointfive website include Linux Foundation, Citizens Bank, Fanatics and Hertz, which is a reasonable indicator of enterprise readiness.

Pricing

Pointfive does not disclose pricing publicly. The platform offers a free proof of value that returns a quantified AI savings report within 48 hours before any commitment.

AI teams should request a written scope before signing, since enterprise AI FinOps deployments at this depth often include implementation services.

Pros

AI Services coverage across SageMaker, Bedrock, Azure OpenAI, Vertex AI and Databricks plus GPU rightsizing across P4d, P5, G5, NC and ND families is one of the broader AI coverage layers in this list, with 400+ optimization types backing it.

Agentic remediation with AI-generated IaC patches closes the gap between AI waste detection and action that most FinOps platforms leave open.

Pointfive offers a quantified AI savings report within 48 hours, which gives teams a concrete number before committing, rare for enterprise-positioned platforms.

Cons

Pricing is not publicly disclosed, which adds time to procurement compared to self-serve AI FinOps platforms like Vantage or Cloudchipr.

Automated remediation through IaC patches assumes the AI team has the engineering maturity to review and merge generated changes, which not every AI ops team has in-house.

Positioning is enterprise-first, so smaller AI teams may find the platform heavier than they need for early-stage AI cost work where simpler LLM cost tracking is enough.

6. Cloudgov

Best for: AI FinOps practitioners and engineering teams that want agentic AI applied to AI infrastructure optimization, with insights converted into Jira tickets and infrastructure-as-code fixes rather than static dashboards. A practical fit for early-stage AI teams thanks to a free Starter tier for AI and cloud spend under $25,000 a year.

Cloudgov.ai is marketed as an Agentic AI Multicloud FinOps Platform for autonomous cost optimization. AI agents identify cloud waste, predict savings, generate IaC remediation code and route actions through Jira and Slack so cost decisions land where engineering teams already work. For AI workloads, this applies to the underlying cloud and managed AI infrastructure layer. The platform documents typical savings of 20% to 30%, with a marketing claim of 30%+ on customer deployments.

Native LLM token ingest for OpenAI, Anthropic or Bedrock is not currently documented on the Cloudgov website. AI teams whose bill is mostly LLM API tokens should pair Cloudgov with a token-tracking platform such as Amnic, Vantage or Pointfive. Teams whose AI bill is mostly GPU compute and managed AI infrastructure can use Cloudgov on its own.

Key features

Continuous Multicloud Observability across AWS, Azure, GCP, Snowflake and MongoDB, useful when AI workloads sit alongside data platforms used for training and RAG pipelines.

AI-Driven Actionable Insights predict savings on AI infrastructure and propose specific actions with ready-to-use code templates, rather than generic recommendations.

IaC Remediation generates infrastructure-as-code patches for identified AI infrastructure waste so engineering teams apply fixes through the workflow they already use.

Anomaly Detection on AI infrastructure spend with documented typical customer outcomes of 20% to 30% savings.

Seamless Jira Integration converts AI cost insights and anomalies into Jira tickets assigned to the right AI team, useful for FinOps programs that operate inside engineering workflows.

Unit Economics and Tagging for allocating AI infrastructure spend across AI products, teams and business units that share underlying cloud accounts.

FOCUS specification support so cost reports follow the open standard and integrate with finance and BI tooling without custom ETL.

Available on AWS Marketplace, which simplifies procurement for AWS-native AI teams that prefer marketplace billing.

Pricing

Cloudgov offers a free Starter tier for organizations with up to $25,000 in annual cloud spend, plus Pro, Business and Enterprise tiers for spend bands from $1M to $10M+, each with a 14-day free trial.

The free Starter tier is unusual at this depth of capability, which makes Cloudgov practical for early-stage AI teams piloting AI FinOps before they have meaningful AI infrastructure spend.

Pros

Free Starter tier under $25,000 annual spend is rare in the category and removes the procurement barrier for early-stage AI teams.

Agentic AI generates infrastructure-as-code fixes for AI workload waste, which closes the gap between recommendation and execution that most FinOps tools leave open.

Jira and Slack integration routes AI cost insights into the engineering workflow, so action lives where the AI team already works rather than in a separate dashboard.

Cons

Native LLM token ingest for OpenAI, Anthropic and Bedrock is not currently documented on the Cloudgov product page, so AI-heavy teams will pair it with a token-tracking tool for the LLM side of the bill.

AI-specific optimization actions like prompt caching, inference batching and model selection are not specifically marketed, so confirm coverage with the vendor during the proof of concept.

The platform is newer than enterprise incumbents, so AI procurement teams at risk-averse enterprises will need to validate operational maturity before contract.

7. Cloudchipr

Best for: Mid-market AI teams, engineering leads and finance owners who want actionable AI cost reduction through AI agents and a natural-language chat, without committing to an enterprise contract. Strongest fit for AI teams that want to query AI spend data, explain AI cost spikes and automate cleanup on AI workloads with if-then rules in days rather than weeks.

Cloudchipr is positioned as an Actionable FinOps Platform that pairs visibility with AI agents that automate, analyze and take actions on infrastructure spend. AI Cost Optimization is listed as a use case on the platform. The Ask AI chat lets AI teams query spend and trends in natural language, the platform explains AI cost spikes automatically and a no-code automation layer turns if-then logic into ongoing cleanup workflows on AI infrastructure.

Native LLM token ingest for OpenAI, Anthropic or Bedrock is not specifically documented. AI teams whose primary cost is GPU infrastructure and managed AI services will get the most value, while teams whose biggest AI cost is LLM tokens should pair Cloudchipr with a token-tracking platform.

Key features

AI agents that automate, analyze and take actions on AI infrastructure spend, including Ask AI natural-language chat for AI cost questions.

AI Cost Optimization listed as a use case on the platform, with Explain Reports With AI for AI trend interpretation.

Automatic insights on cost changes, spikes and trends, useful for AI teams investigating an unexpected jump in their infrastructure bill.

Live Resources tracking linked to CPU and network metrics, useful for identifying idle GPU and inference instances that drive AI cost waste.

Dimensions feature creates dynamic cost allocation rules for splitting AI spend across teams, AI products and business units.

No-code automation workflows use if-then logic to clean up idle AI resources, stop non-production AI environments and enforce tagging on AI workloads.

Commitments planning for Reserved Instances and Savings Plans, which stabilizes the cost of long-running AI training and inference workloads.

14-day free trial with no credit card, then self-serve tiers starting at $49 a month, which makes Cloudchipr one of the few AI FinOps platforms with public pricing.
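To make the if-then automation idea concrete, here is a minimal sketch of an idle-GPU cleanup rule evaluated in dry-run mode. It is not Cloudchipr's implementation or API. The `Resource` fields, the 5% utilization threshold, the 24-hour idle window and the `env` tag convention are all hypothetical assumptions chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Resource:
    # Hypothetical inventory record; field names are illustrative, not a vendor schema.
    resource_id: str
    instance_type: str
    avg_gpu_util_pct: float  # average GPU utilization over the lookback window
    idle_hours: float        # hypothetical metric: consecutive hours with negligible GPU activity
    tags: dict = field(default_factory=dict)


def evaluate_rule(resources, util_threshold=5.0, min_idle_hours=24.0):
    """IF a non-production resource is under util_threshold and has been idle for
    at least min_idle_hours, THEN propose a stop; otherwise flag it if untagged.
    Dry run only: returns proposed actions and never calls a cloud API."""
    actions = []
    for r in resources:
        env = r.tags.get("env", "untagged")
        if env == "prod":
            continue  # this sketch never proposes changes to production
        if r.avg_gpu_util_pct < util_threshold and r.idle_hours >= min_idle_hours:
            actions.append(("stop", r.resource_id))
        elif env == "untagged":
            actions.append(("flag_missing_tag", r.resource_id))
    return actions


fleet = [
    Resource("i-train-01", "p4d.24xlarge", 2.0, 40.0, {"env": "dev"}),
    Resource("i-infer-01", "g5.xlarge", 63.0, 0.0, {"env": "prod"}),
    Resource("i-notebook-07", "g5.2xlarge", 1.5, 72.0, {}),
    Resource("i-eval-03", "g5.xlarge", 35.0, 0.0, {}),
]
print(evaluate_rule(fleet))
# [('stop', 'i-train-01'), ('stop', 'i-notebook-07'), ('flag_missing_tag', 'i-eval-03')]
```

Returning proposed actions rather than executing them keeps the rule safe to run and leaves the final infrastructure change with the engineering team.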

Pricing

Cloudchipr publishes self-serve pricing: Basic at $49 a month, Advanced at $189, Pro at $445 with Ask AI and anomaly detection, and Enterprise on custom pricing. Annual plans get a 10% discount.

The 14-day free trial with no credit card and the published tier structure make Cloudchipr unusually transparent for AI FinOps, which helps mid-market AI teams that need a fast procurement cycle.

Pros

Public self-serve pricing from $49 a month removes the procurement friction common in AI FinOps, so mid-market AI teams can start without a sales conversation.

AI agents and Ask AI natural-language chat let any role query AI spend data and get a useful answer, which closes the literacy gap that keeps finance dependent on FinOps analysts.

No-code automation with if-then logic turns one-off AI cleanup into ongoing policy, so AI cost reduction does not depend on a quarterly review cycle.

Cons

Native LLM token ingest for OpenAI, Anthropic and Bedrock is not documented, so AI-heavy teams will pair Cloudchipr with a token-tracking platform for the LLM side of the bill.

Kubernetes coverage is listed as coming soon, which limits Cloudchipr for AI teams whose training and inference workloads run on Kubernetes today.

Ask AI and anomaly detection are gated to the Pro tier and above, so AI teams on Basic or Advanced get the visibility but not the full AI workflow.

8. Apptio Cloudability

Best for: Large enterprises with a dedicated FinOps team that runs monthly AI business reviews, reports to a CFO and needs finance-grade chargeback and showback for AI infrastructure across business units. Cloudability fits regulated industries and Fortune 500 environments where audit-ready AI cost reporting matters more than self-serve speed.

Cloudability, now part of IBM, brings veteran FinOps depth and enterprise governance for AI infrastructure spend. It is the default choice for organizations with a CFO-reported FinOps practice that runs monthly cost close on AI workloads and policy-based chargeback for shared AI infrastructure across business units.

Native LLM token ingest is less mature than in Amnic, Vantage or Finout. AI spend usually flows into Cloudability as service-level cost data from the underlying cloud bill (SageMaker, Bedrock and Azure OpenAI line items) rather than through first-party LLM provider connectors with token-level granularity.

Key features

Multi-cloud governance with policy enforcement on AI infrastructure budgets that triggers alerts or approval workflows when AI teams breach spending rules.

Detailed chargeback reports for shared infrastructure allocate networking, security and platform costs back to business units with audit-ready documentation, which can be extended to managed AI service line items.

Reservation and savings plan optimization across AWS, Azure and GCP, modeling coverage gaps and recommending specific purchases with projected ROI, applicable to compute used for AI training and inference.

Data exports and BI tool integrations push AI cost data to Tableau, Power BI and other enterprise reporting tools on a schedule, useful for AI executive reviews.

Tag intelligence flags untagged AI spend and assigns ownership through policy rules, useful for AI workloads spread across multiple accounts and projects.

Budget management with thresholds and forecasts mapped to AI business unit hierarchies, designed for finance-led AI FinOps programs.

Long history of enterprise FinOps deployments gives Cloudability strong credibility with AI procurement and CFO buyers at risk-averse enterprises.
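Chargeback for shared infrastructure usually comes down to splitting a shared cost pool in proportion to a usage driver. The sketch below shows that arithmetic for a shared platform cost split by each business unit's share of GPU hours. It is not Cloudability's allocation engine; the business-unit names, the GPU-hour driver and the dollar figures are hypothetical.

```python
def allocate_shared_cost(shared_cost, usage_by_unit):
    """Split shared_cost across units in proportion to their usage driver."""
    total_usage = sum(usage_by_unit.values())
    if total_usage == 0:
        raise ValueError("cannot allocate: total usage is zero")
    allocation = {
        unit: round(shared_cost * usage / total_usage, 2)
        for unit, usage in usage_by_unit.items()
    }
    # Push any rounding remainder onto the largest consumer so totals reconcile to the cent.
    remainder = round(shared_cost - sum(allocation.values()), 2)
    largest = max(usage_by_unit, key=usage_by_unit.get)
    allocation[largest] = round(allocation[largest] + remainder, 2)
    return allocation


gpu_hours = {"search": 1200, "support-bot": 600, "risk-models": 200}
print(allocate_shared_cost(18000.00, gpu_hours))
# {'search': 10800.0, 'support-bot': 5400.0, 'risk-models': 1800.0}
```

Reconciling the rounding remainder matters for audit-ready reports: the allocated amounts should sum back to the invoice total.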

Pricing

Cloudability is sold through IBM enterprise agreements with pricing structured around AI and cloud spend volume and the number of accounts under management. There is no self-serve option and no free trial.

Most deployments include a professional services engagement on AI cost allocation, so the true cost of adoption includes implementation time on top of the license fee.

Pros

One of the most established platforms in the category with a long history of enterprise FinOps deployments, which carries weight with AI procurement teams at Fortune 500 buyers.

Chargeback and showback reporting on AI infrastructure is among the most detailed available with policy-based allocation rules that hold up under finance audit requirements.

Reservation analytics and committed-use discount modeling are mature across AWS, Azure and GCP, reliable for enterprises with large reservation portfolios including those covering GPU compute.

Cons

Deployment typically takes 6 to 12 weeks and usually requires IBM professional services, which delays time to first AI cost insight.

Native LLM provider ingest for OpenAI, Anthropic and Bedrock token-level data trails dedicated platforms, so AI-heavy enterprises pair Cloudability with a token-tracking tool to get the full AI picture.

The interface is designed for trained FinOps analysts, so AI engineering teams find the learning curve steep compared to self-serve AI FinOps platforms.

How to Choose the Right FinOps Platform for AI

The right FinOps platform for AI is the one that solves your single biggest AI cost problem in the first 90 days, not the one with the longest integration list. Most AI teams overweight features they will never use and underweight the one constraint that actually decides success, which is usually native LLM ingest, GPU coverage, security review on write-access tools or finance-grade AI chargeback for the CFO.

Pick by the AI cost problem you are facing right now:

LLM token visibility problem: choose Amnic, Vantage or Finout for native ingest of OpenAI, Anthropic and Bedrock token spend.

AI unit economics problem (cost per inference, cost per AI customer, cost per feature): choose Amnic or CloudZero.

GPU and AI training waste problem: choose Pointfive for AI-specific GPU rightsizing and inference batching.

Automated AI remediation problem (turning insights into IaC fixes): choose Pointfive or Cloudgov.

Mid-market AI self-serve problem: choose Vantage or Cloudchipr for transparent published pricing on AI cost tooling.

Enterprise AI chargeback and audit problem: choose Amnic or Apptio Cloudability.

Early-stage AI evaluation problem: choose the Amnic startup tier, the Vantage free tier or the Cloudgov free Starter tier for $0 entry into AI cost tracking.

If AI agents and natural-language querying matter to your CFO and SREs, check our deeper guide on the top AI agent tools for FinOps before shortlisting.

Common Mistakes When Choosing AI Cost Management Software

Treating AI cost as an extension of cloud cost. Token-based LLM billing does not behave like provisioned cloud resources, so a tool that only reads CUR or billing exports will miss the fastest-growing cost line. Ask every vendor what they ingest from OpenAI, Anthropic, Bedrock and Vertex directly at token level.

Buying an LLM tracker that ignores GPU compute. Most enterprise AI bills are split between LLM API tokens and GPU training and inference. A platform that tracks only one side leaves half the AI bill in the dark. Confirm the tool covers both before signing.

Choosing a platform that fits current AI scale instead of AI scale 18 months out. AI spend typically grows 3 to 10 times in 12 months, and a tool priced for $50K of AI spend rarely fits $500K. Pick for projected AI scale, not today's token bill.

Ignoring time to first AI insight. Some enterprise tools take 6 to 12 weeks to deploy. If your CFO is asking for AI savings this quarter, that timeline kills momentum. Ask vendors to show a real customer's AI dashboard as it looked 30 days after kickoff.

Skipping the security review on write-access AI tools. Tools that automate AI infrastructure changes need write access. Many security teams refuse to grant it for AI workloads in regulated industries. Confirm with your security lead before signing, not after.

Choosing on demo polish, not customer outcomes. A polished AI cost dashboard is not the same as a working deployment. Ask every vendor for three named customer outcomes with measurable savings on LLM or GPU spend. If they cannot share at least two within 48 hours, that is your answer.

Underestimating the AI unit economics gap. If product and finance leaders cannot answer cost per inference, cost per AI query or cost per AI customer, no amount of dashboard polish will close the AI ROI question. Make AI unit economics a hard requirement, not a nice-to-have.
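Cost per inference, cost per AI customer and cost per feature are simple ratios once spend is attributed to individual calls. A minimal sketch, assuming hypothetical usage records that already carry a computed dollar cost, a customer ID and a feature name (the record shape and figures are illustrative, not any vendor's export format):

```python
from collections import defaultdict

# Hypothetical attributed usage: (customer_id, feature, cost_usd) per inference call.
records = [
    ("acme", "summarize", 0.012),
    ("acme", "summarize", 0.018),
    ("acme", "chat", 0.040),
    ("globex", "chat", 0.030),
    ("globex", "chat", 0.050),
]


def unit_costs(records, key_index=0):
    """Aggregate cost and call count by one dimension
    (key_index 0 = customer, 1 = feature) and derive cost per call."""
    totals = defaultdict(lambda: {"cost": 0.0, "calls": 0})
    for record in records:
        bucket = totals[record[key_index]]
        bucket["cost"] += record[2]
        bucket["calls"] += 1
    return {
        key: {**t, "cost_per_call": t["cost"] / t["calls"]}
        for key, t in totals.items()
    }


for customer, u in unit_costs(records).items():
    print(customer, round(u["cost"], 3), u["calls"], round(u["cost_per_call"], 4))
# acme 0.07 3 0.0233
# globex 0.08 2 0.04
```

Passing `key_index=1` groups the same records by feature instead, which gives cost per AI feature from the same data.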

Why Decision Makers Choose Amnic for AI Cost Management

Amnic is built around a simple belief: AI cost should be transparent for every role, not just FinOps specialists. The platform pairs deep AI allocation granularity with an AI agent layer that finance leaders, AI engineers and product managers can each use without training.

Three differentiators matter most to the decision makers we talk to every week, and they are also why teams shortlist Amnic alongside AI-native FinOps approaches.

AI coverage that actually goes deep. Amnic tracks AI spend natively across Bedrock today, with OpenAI, Anthropic and Gemini rolling out, and breaks down input and output token cost per model, attributing usage to teams and users. That is the granularity AI teams need to allocate inference spend and answer cost per query or cost per customer.

Read-only access by design. Amnic never writes to your AI infrastructure. Your AI engineering team owns every change. That single architectural choice is why security teams approve Amnic in days instead of months, which matters most for regulated AI workloads in fintech, healthcare and BFSI.

AI agents any role can use. Amnic AI ships four agents (X-Ray, Insights, Governance and Reporting) that turn natural-language AI cost questions into filtered dashboards. A CFO can ask "What did we spend on Bedrock or OpenAI last month?" and get an answer in 30 seconds, without an analyst in the loop.

Customer outcomes back this up:

50% Kubernetes cluster cost reduction at Jiffy.ai (AI and automation)

40% compute cost reduction at Nanonets (AI/ML SaaS)

33% EC2 cost reduction at MetaMap

30% total cloud cost reduction at Open Financial

30% NAT and CloudWatch reduction at LambdaTest

20% infrastructure cost reduction at Uni

"The maturity of Amnic AI, along with how easily we integrated it across our multi-cloud setup, was phenomenal. The team is consistently open to ideas and prioritizes the roadmap based on customer needs." - Senior FinOps Lead, G2 verified review

For teams benchmarking their FinOps program before choosing a tool, our piece on FinOps maturity in the AI era walks through the levels and what each one needs from the platform.

Frequently Asked Questions

What are FinOps tools for AI cost management?

FinOps tools for AI cost management are platforms that ingest spend from LLM providers like OpenAI, Anthropic and Amazon Bedrock, GPU compute used for AI training and inference, and managed AI services like SageMaker and Vertex AI, then allocate that spend to teams, models, AI products or customers. The best platforms surface anomalies on token spend in real time and let finance, AI engineering and product query the AI bill without switching tools. Amnic, Vantage and Finout lead the category in 2026 for teams that need both depth and native LLM ingest.

How is FinOps for AI different from cloud FinOps?

Cloud FinOps tracks provisioned resources priced per hour or per GB. FinOps for AI handles token-based LLM billing, shared GPU clusters and inference endpoints whose cost fluctuates with prompt length, model choice and traffic. AI spend crosses OpenAI, Anthropic, Bedrock and GPU providers and rarely sits inside a single account, so virtual tagging, native LLM connectors and AI unit economics matter more than they do in traditional FinOps.
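The difference shows up in the arithmetic. A provisioned instance costs hours times an hourly rate regardless of traffic, while an LLM call costs input tokens times an input price plus output tokens times an output price, so the same request count can produce very different bills. A minimal sketch; the model names, per-million-token prices and hourly rate below are hypothetical placeholders, not any provider's current rates:

```python
# Hypothetical prices in USD per million tokens; check each provider's pricing page.
PRICES = {
    "small-model": {"input": 0.50, "output": 1.50},
    "large-model": {"input": 5.00, "output": 15.00},
}


def request_cost(model, input_tokens, output_tokens):
    """Token-based cost of one LLM call."""
    p = PRICES[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


def monthly_cost(model, requests, avg_input, avg_output):
    return requests * request_cost(model, avg_input, avg_output)


# Same 1M requests a month, different prompt length and model choice.
baseline = monthly_cost("small-model", 1_000_000, 500, 200)
longer_prompts = monthly_cost("small-model", 1_000_000, 2_000, 200)
bigger_model = monthly_cost("large-model", 1_000_000, 500, 200)
# Provisioned contrast: roughly 730 hours a month at a hypothetical $4.00/hour, flat.
provisioned = 730 * 4.00

print(f"baseline:       ${baseline:,.2f}")        # $550.00
print(f"longer prompts: ${longer_prompts:,.2f}")  # $1,300.00
print(f"bigger model:   ${bigger_model:,.2f}")    # $5,500.00
print(f"provisioned:    ${provisioned:,.2f}")     # $2,920.00
```

Quadrupling prompt length more than doubles the bill here, and switching models multiplies it tenfold, while the provisioned line does not move with either.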

Can these tools track OpenAI and Anthropic spend?

Yes, several do. Vantage and Finout have native first-party connectors for OpenAI and Anthropic with token-level breakdowns. Amnic ships native tracking for Amazon Bedrock today with OpenAI and Anthropic coverage rolling out. CloudZero ingests OpenAI and Anthropic through its AnyCost API. If you are weighing which provider to standardize on first, our breakdown of OpenAI API vs Bedrock vs Vertex AI is a useful reference before signing.

How do you allocate AI costs across teams and products?

Allocation works through tags, virtual tags or a custom dimension layer. Native tags work when AI engineering owns the tagging discipline, but break down at scale because most AI workloads share infrastructure across teams. Virtual tags, as in Amnic and Finout, let finance allocate AI spend without re-tagging. CloudZero CostFormation goes further with custom business dimensions for cost per AI feature or cost per AI customer. Pick the model that matches who owns AI allocation in your organization.
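Virtual tagging can be pictured as an ordered list of rules evaluated against billing line items at query time, so the source resources never need re-tagging. The sketch below is a generic illustration, not Amnic's or Finout's rule engine; the line-item fields, service strings, placeholder account IDs, rule conditions and team names are all hypothetical.

```python
# Each rule: (team, predicate). First match wins; unmatched spend stays visible as "unallocated".
RULES = [
    ("search", lambda li: li.get("tags", {}).get("team") == "search"),
    ("support-bot", lambda li: li["service"] == "Amazon Bedrock" and li["account"] == "111111111111"),
    ("ml-platform", lambda li: li["service"] == "Amazon SageMaker"),
]


def virtual_tag(line_item, rules=RULES):
    """Return the team for a line item without modifying the underlying resource."""
    for team, matches in rules:
        if matches(line_item):
            return team
    return "unallocated"


def allocate(line_items, rules=RULES):
    totals = {}
    for li in line_items:
        team = virtual_tag(li, rules)
        totals[team] = totals.get(team, 0.0) + li["cost"]
    return totals


items = [
    {"service": "Amazon Bedrock", "account": "111111111111", "cost": 420.0, "tags": {}},
    {"service": "Amazon SageMaker", "account": "222222222222", "cost": 900.0, "tags": {"team": "search"}},
    {"service": "Amazon EC2", "account": "222222222222", "cost": 310.0, "tags": {}},
]
print(allocate(items))
# {'support-bot': 420.0, 'search': 900.0, 'unallocated': 310.0}
```

Rule order encodes precedence: the SageMaker line item carries an explicit team tag, so the tag rule wins over the broader service rule. Keeping an "unallocated" bucket makes gaps in the rules visible instead of silently dropping spend.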

Do FinOps tools for AI need write access to my cloud?

Not always. Amnic operates with read-only access on AI billing and monitoring data, so your engineering team owns every AI infrastructure change. Pointfive and Cloudgov act on AI cost insights by generating infrastructure-as-code patches that the team reviews and merges, which may need write access depending on the deployment mode. Read-only platforms clear security review faster, which matters most for regulated AI workloads. Verify the access model with your security lead before starting a proof of concept.

How much can a FinOps tool for AI cost management save?

Most AI teams recover 10 to 20% of AI spend in the first 90 days through GPU rightsizing, idle inference endpoint shutdown, prompt efficiency and model selection. Amnic customers have hit 30 to 50% on specific cost categories like Kubernetes clusters that run AI training and inference. How much each lever contributes depends on your workload mix, which the best platforms surface as concrete recommendations rather than generic suggestions.

Which FinOps tool is best for tracking LLM and token costs?

Amnic for teams that want LLM tracking inside a unified multi-cloud platform with AI agents for plain-language queries. Vantage for fast self-serve setup with native OpenAI, Anthropic, Databricks and Anyscale ingest. Finout for enterprise teams that need OpenAI and Anthropic alongside a virtual MegaBill view. Pick on whether you need single-platform unification or a best-of-breed LLM tracker bolted alongside an existing cloud cost tool.

How long does it take to deploy a FinOps platform for AI?

Read-only platforms like Amnic and Vantage onboard in hours, with most AI teams seeing a working LLM and GPU cost dashboard the same day. Finout and CloudZero typically take a few weeks after the sales process. Enterprise tools like Apptio Cloudability run 6 to 12 weeks with professional services. Time to first AI insight is the metric to anchor on, not feature count, especially when the CFO is asking for AI savings this quarter.

Cut Your AI Bill in the Next Quarter

If you are a CFO, FinOps lead, CTO or VP of AI Engineering looking to control AI spend before the next board review, Amnic is built for you. The platform pairs native LLM token tracking on Bedrock today with OpenAI, Anthropic and Gemini coverage rolling out, AI unit economics for cost per inference and four AI agents on read-only access, so the security review and the AI savings land at the same time.

Book a 30-minute Amnic demo and see your top three AI cost leaks across LLM tokens, GPU compute and inference endpoints before the call ends.

